
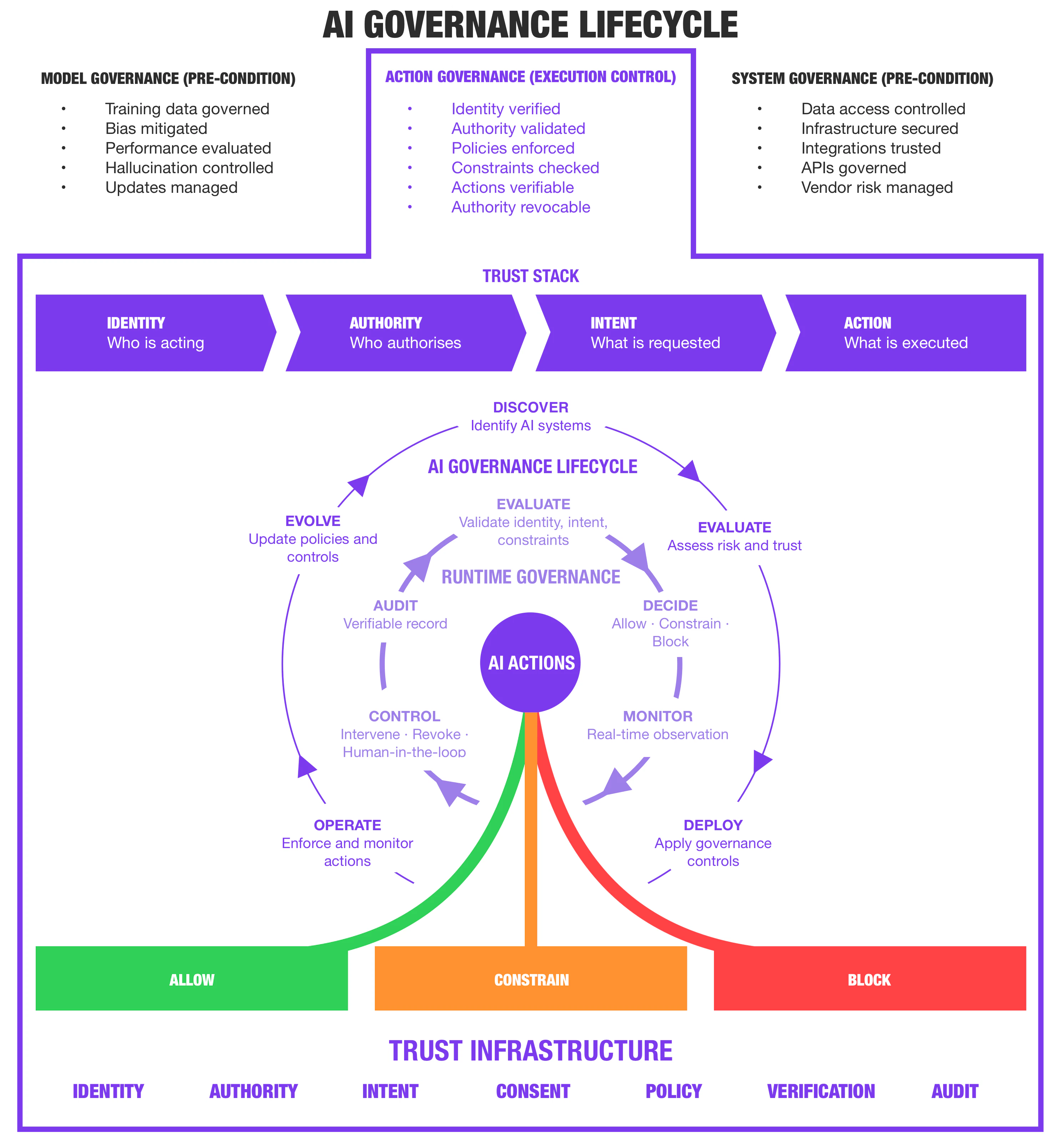

Governing AI at the point of execution

AI systems are no longer just generating outputs. They are taking actions across enterprise systems, data and infrastructure. Enterprises can control who accesses those systems, but they cannot prove what AI does once inside them.

If an AI system in your environment executed a harmful action today, could you prove:

- Who authorized it?

- What constraints applied?

- Whether it should have been allowed?

The problem

Traditional identity and security models were designed for a world where humans interact directly with software. They answer who has access and what permissions they hold. They do not answer who authorized an AI action, whether it should execute, whether it complies with policy and constraints, or whether any of this can be proven to an auditor or regulator. As AI systems begin operating autonomously, these unanswered questions become the primary risk.

The gap

Two governance layers already exist. One is missing.

Model Governance

Ensuring models are safe, reliable and fit for purpose. It is not the control point.

System Governance

Ensuring infrastructure, data and integrations are secure. It is not the control point.

Action Governance

Determining whether AI actions are authorized to execute, enforcing that decision in real time, and producing verifiable evidence.

This is the control point. This is what existing frameworks do not address.

The framework

The Enterprise AI Governance Framework introduces Action Governance as a new control layer for AI systems that act. It operates after access and before execution. Every AI action is evaluated through a verifiable chain of responsibility whose elements are assessed together at the point of execution. The decision is enforced in real time and recorded as verifiable evidence.
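To make "after access, before execution" concrete, here is a minimal Python sketch of an action gate. It is an illustration under stated assumptions, not the framework's specified mechanism: `Action`, `Policy`, `EvidenceLog` and `govern` are hypothetical names, the three checks (identity, delegated authority, constraints) are assumed from the questions this page asks rather than taken from the framework's chain of responsibility, and SHA-256 hash chaining is one common way, swapped in here, to make recorded evidence tamper-evident.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


@dataclass
class Action:
    actor: str      # identity of the AI system requesting the action
    principal: str  # human or system that delegated authority to the actor
    operation: str  # what the actor wants to do, e.g. "refund"
    amount: float   # a parameter the constraints apply to


@dataclass
class Policy:
    # Hypothetical policy model: registered actors, delegated operations,
    # and one numeric constraint.
    known_actors: set
    delegations: dict  # (principal, actor) -> set of allowed operations
    max_amount: float


@dataclass
class EvidenceLog:
    """Append-only log in which every record embeds the previous record's hash."""
    records: list = field(default_factory=list)

    def append(self, entry: dict) -> None:
        prev = self.records[-1]["hash"] if self.records else GENESIS
        body = {"prev": prev, **entry}
        digest = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
        self.records.append({**body, "hash": digest})

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered record breaks it."""
        prev = GENESIS
        for rec in self.records:
            body = {k: v for k, v in rec.items() if k != "hash"}
            digest = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
            if body["prev"] != prev or digest != rec["hash"]:
                return False
            prev = rec["hash"]
        return True


def govern(action: Action, policy: Policy, log: EvidenceLog) -> bool:
    """Evaluate an action after access and before execution, then record the decision."""
    checks = {
        "identity": action.actor in policy.known_actors,
        "authority": action.operation
        in policy.delegations.get((action.principal, action.actor), set()),
        "constraints": action.amount <= policy.max_amount,
    }
    allowed = all(checks.values())
    log.append({"actor": action.actor, "principal": action.principal,
                "operation": action.operation, "amount": action.amount,
                "checks": checks, "allowed": allowed})
    return allowed  # the caller executes the action only if this is True


# Usage with hypothetical actors and limits.
policy = Policy({"billing-agent"}, {("alice", "billing-agent"): {"refund"}}, 500.0)
log = EvidenceLog()
ok = govern(Action("billing-agent", "alice", "refund", 120.0), policy, log)       # True
blocked = govern(Action("billing-agent", "alice", "refund", 9000.0), policy, log)  # False
intact = log.verify()              # True
log.records[1]["allowed"] = True   # simulate tampering with the evidence
tampered_ok = log.verify()         # False
```

Each log record answers the three questions for one action: who authorized it (`principal` plus the delegation check), what constraints applied (`checks`), and whether it should have been allowed (`allowed`). Because every record hashes its predecessor, rewriting a past decision invalidates the chain on the next `verify()`.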

1. Classify

Map your active AI deployments against the risk tiers in the framework. Identify which systems are already operating at the High or Critical tier — systems that execute actions, not just generate outputs.

2. Gap-assess

Can you verify the identity of every AI actor? Prove the authority it was operating under? Produce tamper-resistant audit evidence on demand? If the answer to any is no, that is your governance gap.

3. Prioritize

Establish identity and delegated authority controls for your highest-risk systems first, before expanding to full policy enforcement and runtime governance. Identity and access management (IAM) is the foundation. Action Governance is the next layer. Both are required.
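The three steps above can be sketched as a small inventory script. This is a hypothetical illustration, assuming each deployment has already been mapped to a tier from the framework (whose full tier list is not reproduced here); only "High" and "Critical" come from the text, and "Low" below is a placeholder. `Deployment`, `classify`, `gaps` and `prioritize` are illustrative names, and the sketch only orders systems; it does not encode which controls to build first.

```python
from dataclasses import dataclass

# Priority among the tiers the text names; lower number = handle first.
PRIORITY = {"Critical": 0, "High": 1}


@dataclass
class Deployment:
    name: str
    tier: str                 # assigned by mapping against the framework's risk tiers
    identity_verified: bool   # can we verify the identity of this AI actor?
    authority_provable: bool  # can we prove the authority it operated under?
    evidence_on_demand: bool  # can we produce tamper-resistant audit evidence on demand?


def classify(deployments):
    """Step 1: keep the systems mapped to the High or Critical tier."""
    return [d for d in deployments if d.tier in PRIORITY]


def gaps(d):
    """Step 2: every 'no' answer is a governance gap."""
    answers = {
        "identity": d.identity_verified,
        "authority": d.authority_provable,
        "evidence": d.evidence_on_demand,
    }
    return [question for question, yes in answers.items() if not yes]


def prioritize(deployments):
    """Step 3: High/Critical systems with gaps, highest-risk tier first."""
    flagged = [d for d in classify(deployments) if gaps(d)]
    return sorted(flagged, key=lambda d: PRIORITY[d.tier])


# Hypothetical inventory; "Low" is a placeholder tier name.
inventory = [
    Deployment("support-chatbot", "Low", False, False, False),
    Deployment("ticket-triage", "High", True, True, True),
    Deployment("infra-agent", "High", True, False, True),
    Deployment("payments-agent", "Critical", False, True, False),
]
for d in prioritize(inventory):
    print(d.name, gaps(d))
```

With this inventory the script prints `payments-agent ['identity', 'evidence']` followed by `infra-agent ['authority']`: the chatbot sits outside the High and Critical tiers, and ticket-triage already answers yes to all three gap-assessment questions.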